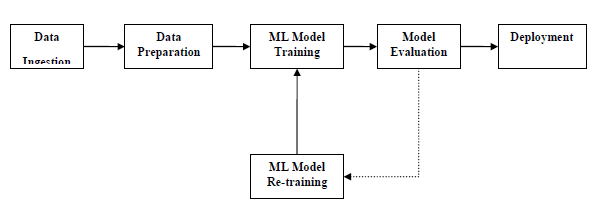

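To run successfully and produce results, a machine learning model must automate certain standard workflows, and Scikit-learn Pipelines can handle this automation. From a data scientist's perspective, a pipeline is an essential concept: it lets data flow from its raw format to useful information. The figure above illustrates how a pipeline works.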
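As a minimal sketch of what "flowing through a pipeline" means, the example below (using synthetic data from make_classification as an assumed stand-in for a real dataset) shows that a fitted Pipeline produces the same predictions as fitting each step by hand and passing the transformed data to the next step.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data, assumed here only for illustration
X, y = make_classification(n_samples=200, n_features=8, random_state=0)

# Intermediate steps are fit and transform the data; the last step is the model
pipe = Pipeline([('standardize', StandardScaler()),
                 ('lda', LinearDiscriminantAnalysis())])
pipe.fit(X, y)

# The same work done manually, one step at a time
scaler = StandardScaler().fit(X)
lda = LinearDiscriminantAnalysis().fit(scaler.transform(X), y)

pipe_pred = pipe.predict(X)
manual_pred = lda.predict(scaler.transform(X))
print(np.array_equal(pipe_pred, manual_pred))  # True
```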

ML Data Preparation

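Data leakage from the training dataset to the test dataset is an important issue that data scientists must handle when preparing data for an ML model. Typically, during data preparation, a data scientist applies techniques such as standardization or normalization to the entire dataset before learning. Applied this way, these techniques cause data leakage, because the training data is influenced by the scale of the data in the test dataset. ML pipelines prevent this leakage by ensuring that data preparation steps such as standardization are fit only within each fold of the cross-validation procedure.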
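The following sketch checks that claim directly, assuming synthetic data from make_classification and 5 folds chosen only for illustration: with cross_validate(..., return_estimator=True), the StandardScaler inside each fitted pipeline has learned the mean of that fold's training rows, not the mean of the full dataset.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import KFold, cross_validate
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=200, n_features=8, random_state=0)

pipe = Pipeline([('standardize', StandardScaler()),
                 ('lda', LinearDiscriminantAnalysis())])
kfold = KFold(n_splits=5)  # unshuffled, so the splits are deterministic

# return_estimator=True keeps the pipeline fitted on each fold
cv_result = cross_validate(pipe, X, y, cv=kfold, return_estimator=True)

for (train_idx, test_idx), fitted in zip(kfold.split(X), cv_result['estimator']):
    scaler_mean = fitted.named_steps['standardize'].mean_
    # The scaler saw only this fold's training rows ...
    assert np.allclose(scaler_mean, X[train_idx].mean(axis=0))
    # ... not the whole dataset, which would include the test rows
    assert not np.allclose(scaler_mean, X.mean(axis=0))
print("scaler fitted on training folds only")
```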

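The following Python example demonstrates a data preparation and model evaluation workflow using the Pima Indians Diabetes dataset, loaded from a CSV file. First, a pipeline is created that standardizes the data; then a Linear Discriminant Analysis model is added; finally, the pipeline is evaluated with 20-fold cross-validation.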

First, import the required packages:


    from pandas import read_csv
    from sklearn.model_selection import KFold
    from sklearn.model_selection import cross_val_score
    from sklearn.preprocessing import StandardScaler
    from sklearn.pipeline import Pipeline
    from sklearn.discriminant_analysis import LinearDiscriminantAnalysis

Now load the Pima diabetes dataset, as in the previous examples:

    path = r"C:\pima-indians-diabetes.csv"
    headernames = ['preg', 'plas', 'pres', 'skin', 'test', 'mass', 'pedi', 'age', 'class']
    data = read_csv(path, names=headernames)
    array = data.values
    # Split into the 8 input features (X) and the class label (Y)
    X = array[:, 0:8]
    Y = array[:, 8]

Next, create the pipeline:

    estimators = []
    estimators.append(('standardize', StandardScaler()))
    estimators.append(('lda', LinearDiscriminantAnalysis()))
    model = Pipeline(estimators)
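The same two-step pipeline can also be written with scikit-learn's make_pipeline helper, which names each step after its lowercased class name instead of taking explicit names. This is a sketch of an equivalent alternative, not the form the tutorial uses.

```python
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.pipeline import Pipeline, make_pipeline
from sklearn.preprocessing import StandardScaler

# Explicit step names, as in the tutorial
model = Pipeline([('standardize', StandardScaler()),
                  ('lda', LinearDiscriminantAnalysis())])

# make_pipeline derives each step name from the lowercased class name
auto_model = make_pipeline(StandardScaler(), LinearDiscriminantAnalysis())

print(list(model.named_steps))       # ['standardize', 'lda']
print(list(auto_model.named_steps))  # ['standardscaler', 'lineardiscriminantanalysis']
```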

Finally, evaluate the pipeline and print its accuracy:

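    # random_state only applies when shuffle=True; recent scikit-learn versions
    # raise an error if it is passed together with the default shuffle=False
    kfold = KFold(n_splits=20)
    results = cross_val_score(model, X, Y, cv=kfold)
    print(results.mean())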

    0.7790148448043184

The output above is the mean accuracy of the pipeline across the 20 folds.
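To see what that summary is built from: cross_val_score returns one accuracy score per fold, and the printed value is their mean. The sketch below assumes synthetic data in place of the Pima CSV, so its numbers will not match the output above.

```python
from sklearn.datasets import make_classification
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import KFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for the Pima data (assumption for illustration)
X, Y = make_classification(n_samples=400, n_features=8, random_state=7)
model = Pipeline([('standardize', StandardScaler()),
                  ('lda', LinearDiscriminantAnalysis())])

results = cross_val_score(model, X, Y, cv=KFold(n_splits=20))
print(results.shape)  # one accuracy value per fold
print(f"mean={results.mean():.3f} std={results.std():.3f}")
```

Reporting the standard deviation alongside the mean gives a rough sense of how much the accuracy varies from fold to fold.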

ML Feature Extraction

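Data leakage can also occur during the feature extraction step of an ML model, so feature extraction must also be restricted to the training data. As with data preparation, ML pipelines prevent this leakage. The FeatureUnion tool provided by scikit-learn's pipeline module can be used for this purpose: it combines the outputs of several feature extraction steps, and inside a pipeline those steps are fit only on the training folds.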
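A minimal sketch of what FeatureUnion does, assuming 8-feature synthetic data: each transformer is fit on the same input, and their outputs are concatenated column-wise, so 3 PCA components plus 6 selected features yield 9 columns.

```python
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.feature_selection import SelectKBest
from sklearn.pipeline import FeatureUnion

# Synthetic data with 8 input features (assumption for illustration)
X, y = make_classification(n_samples=100, n_features=8, random_state=0)

union = FeatureUnion([('pca', PCA(n_components=3)),
                      ('select_best', SelectKBest(k=6))])

# SelectKBest needs the labels, so y is passed through to fit_transform
X_union = union.fit_transform(X, y)
print(X_union.shape)  # (100, 9)
```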

The following Python example demonstrates a feature extraction and model evaluation workflow, again using the Pima Indians Diabetes dataset.

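First, 3 features are extracted with PCA (Principal Component Analysis). Next, 6 features are selected with univariate statistical tests. After feature extraction, the outputs of these feature selection and extraction steps are combined with the FeatureUnion tool. Finally, a Logistic Regression model is created, and the pipeline is evaluated with 20-fold cross-validation.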
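The "statistical analysis" step is SelectKBest. When no score_func is given, it scores each feature with f_classif (the ANOVA F-value between the feature and the class label) and keeps the k highest-scoring columns. The sketch below, on assumed synthetic data, confirms that the default matches an explicit f_classif.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectKBest, f_classif

X, y = make_classification(n_samples=100, n_features=8, random_state=0)

# Default scoring versus an explicitly passed f_classif
selector = SelectKBest(k=6).fit(X, y)
explicit = SelectKBest(score_func=f_classif, k=6).fit(X, y)

print(selector.get_support(indices=True))            # indices of the 6 kept columns
print((selector.scores_ == explicit.scores_).all())  # True
```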

First, import the required packages:


    from pandas import read_csv
    from sklearn.model_selection import KFold
    from sklearn.model_selection import cross_val_score
    from sklearn.pipeline import Pipeline
    from sklearn.pipeline import FeatureUnion
    from sklearn.linear_model import LogisticRegression
    from sklearn.decomposition import PCA
    from sklearn.feature_selection import SelectKBest

Now load the Pima diabetes dataset, as in the previous examples:

    path = r"C:\pima-indians-diabetes.csv"
    headernames = ['preg', 'plas', 'pres', 'skin', 'test', 'mass', 'pedi', 'age', 'class']
    data = read_csv(path, names=headernames)
    array = data.values
    # Split into the 8 input features (X) and the class label (Y)
    X = array[:, 0:8]
    Y = array[:, 8]

Next, create the feature union:

    features = []
    features.append(('pca', PCA(n_components=3)))
    features.append(('select_best', SelectKBest(k=6)))
    feature_union = FeatureUnion(features)

Next, create the pipeline:

    estimators = []
    estimators.append(('feature_union', feature_union))
    estimators.append(('logistic', LogisticRegression()))
    model = Pipeline(estimators)
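Because the feature union is nested inside the pipeline, its inner settings can still be read or changed from the outer model using the step__substep__parameter naming convention. This is a small sketch of that convention; the new values 2 and 4 are arbitrary, chosen only for illustration.

```python
from sklearn.decomposition import PCA
from sklearn.feature_selection import SelectKBest
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import FeatureUnion, Pipeline

feature_union = FeatureUnion([('pca', PCA(n_components=3)),
                              ('select_best', SelectKBest(k=6))])
model = Pipeline([('feature_union', feature_union),
                  ('logistic', LogisticRegression())])

# Nested parameters are addressed as <step>__<sub-step>__<parameter>
print(model.get_params()['feature_union__pca__n_components'])  # 3

# Illustrative values only
model.set_params(feature_union__pca__n_components=2,
                 feature_union__select_best__k=4)
print(model.get_params()['feature_union__select_best__k'])     # 4
```

This naming is also what hyperparameter search tools in scikit-learn use to reach parameters inside a pipeline.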

Finally, evaluate this pipeline and print its accuracy:

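    # random_state only applies when shuffle=True; recent scikit-learn versions
    # raise an error if it is passed together with the default shuffle=False
    kfold = KFold(n_splits=20)
    results = cross_val_score(model, X, Y, cv=kfold)
    print(results.mean())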

    0.7789811066126855

The output above is the mean accuracy of the pipeline across the 20 folds.


Introductory Python Machine Learning Tutorial